Web scraping is an efficient way to collect large amounts of data from websites. If you've been web scraping for a while, you already understand how valuable the practice is.

Browser / User-Agent filtering controls how and which User-Agent is "randomly" selected. The filter parameters can be passed as an argument to `create_scraper()`, `get_tokens()`, and `get_cookie_string()`. One of them is a platform string, which takes one of 'linux', 'windows', 'darwin', 'android', or 'ios'. A filter can, for example, be set so that it will give you only mobile Chrome User-Agents on Android.

Brotli decompression can be turned off by passing `allow_brotli=False`, as in `scraper = cloudscraper.create_scraper(allow_brotli=False)`.

It's easy to integrate cloudscraper with other applications and tools. Cloudflare uses two cookies as tokens: one to verify you made it past their challenge page and one to track your session. To bypass the challenge page, include both of these cookies (with the appropriate User-Agent) in all HTTP requests you make.
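The cookie handoff described above can be sketched in a few lines. This is a minimal illustration, not cloudscraper's own code: the helper name `build_cloudflare_headers` and the cookie names in the usage comment are hypothetical placeholders, and the real cookie values and User-Agent would come from whatever obtained them (the text mentions `get_tokens()` and `get_cookie_string()` for that purpose).

```python
def build_cloudflare_headers(cookies, user_agent):
    """Build HTTP headers that carry both Cloudflare cookies and the User-Agent.

    `cookies` is a dict of cookie name -> value (hypothetical names are fine
    here); `user_agent` must be the same User-Agent that earned the cookies.
    """
    if not cookies:
        raise ValueError("at least one cookie is required")
    if not user_agent:
        raise ValueError("a User-Agent string is required")

    # A Cookie header is a list of name=value pairs joined by "; ".
    cookie_header = "; ".join(f"{name}={value}" for name, value in cookies.items())
    return {"Cookie": cookie_header, "User-Agent": user_agent}


# Usage sketch with placeholder values (assumed names, not real tokens):
# headers = build_cloudflare_headers(
#     {"challenge_cookie": "abc", "session_cookie": "xyz"},
#     "Mozilla/5.0 (placeholder)",
# )
# The resulting dict can be passed as `headers=` to another HTTP client.
```

Keeping the User-Agent next to the cookies in one helper makes it harder to send the cookies with a mismatched User-Agent, which the text above warns against.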

Calling `cloudscraper.create_scraper()` returns a `CloudScraper` instance. You can also construct one directly with `cloudscraper.CloudScraper()`, since `CloudScraper` inherits from `requests.Session`. Printing the `.text` of a response returned by `scraper.get()` shows the page body.

Any requests made from this session object to websites protected by Cloudflare anti-bot will be handled automatically. Websites not using Cloudflare will be treated normally. You don't need to configure or call anything further, and you can effectively treat all websites as if they're not protected with anything.

You use cloudscraper exactly the same way you use Requests. cloudscraper works identically to a Requests Session object: instead of calling `requests.get()` or `requests.post()`, you call `scraper.get()` or `scraper.post()`. Consult Requests' documentation for more information.

Options

Disable Cloudflare V1: if you don't want to even attempt Cloudflare v1 (deprecated) solving, pass `disableCloudflareV1=True`, as in `scraper = cloudscraper.create_scraper(disableCloudflareV1=True)`.

Brotli: Brotli decompression support has been added, and it is enabled by default.
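The two options above can be collected in one place before the scraper is created. The following is a rough sketch under stated assumptions: `scraper_options` and `build_scraper` are hypothetical helper names, and only the `disableCloudflareV1` and `allow_brotli` keyword arguments, both taken from the text, are passed through to `create_scraper()`.

```python
def scraper_options(disable_v1=False, allow_brotli=True):
    """Translate two simple flags into keyword arguments for create_scraper().

    Only non-default values are emitted, so an empty dict means
    "use cloudscraper's defaults" (Brotli on, V1 solving attempted).
    """
    options = {}
    if disable_v1:
        options["disableCloudflareV1"] = True
    if not allow_brotli:
        options["allow_brotli"] = False
    return options


def build_scraper(disable_v1=False, allow_brotli=True):
    """Create a scraper with the chosen options (requires cloudscraper)."""
    # Imported lazily so scraper_options() stays usable without the package.
    import cloudscraper  # third-party: pip install cloudscraper

    return cloudscraper.create_scraper(**scraper_options(disable_v1, allow_brotli))


# Usage sketch:
# scraper = build_scraper(disable_v1=True, allow_brotli=False)
```

Separating the plain-dict step from the library call keeps the option logic easy to check on its own.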

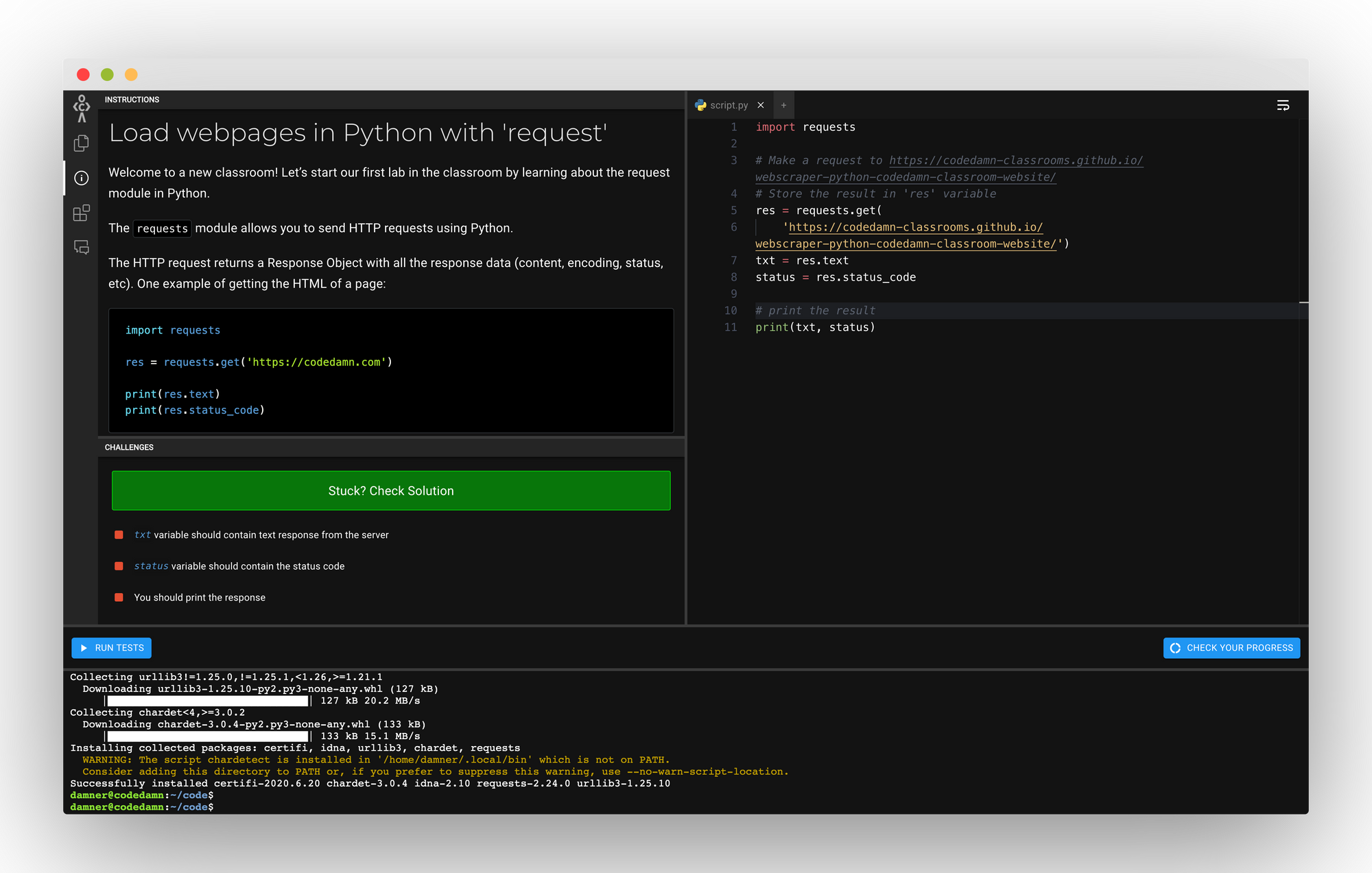

The simplest way to use cloudscraper is by calling `create_scraper()`: after `import cloudscraper`, run `scraper = cloudscraper.create_scraper()`.
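As a small illustration of that simplest case, the sketch below wraps the call in two helpers. The names `make_scraper` and `fetch_text`, the 30-second timeout, and the URL in the usage comment are assumptions made for this example. `fetch_text` accepts any Requests-style session, so it works the same with a `requests.Session` or a cloudscraper instance.

```python
def make_scraper():
    """Return a CloudScraper instance via create_scraper()."""
    # Imported lazily so the rest of this sketch runs without the package.
    import cloudscraper  # third-party: pip install cloudscraper

    return cloudscraper.create_scraper()


def fetch_text(session, url, timeout=30):
    """Fetch `url` with a Requests-style session and return the body text."""
    response = session.get(url, timeout=timeout)
    # Raise an exception on 4xx/5xx instead of returning an error page.
    response.raise_for_status()
    return response.text


# Usage sketch (placeholder URL, performs a real network request):
# scraper = make_scraper()
# html = fetch_text(scraper, "https://example.com")
```

Because the session is passed in rather than created inside `fetch_text`, the same function can be exercised with a stub object in tests.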
